Once you join the ranks of Amazon S3 users, you’re likely to stay a fan of AWS storage forever. Indeed, Amazon S3 is solid storage with top security, high scalability, and low prices. But there is always a “but”.

Like any other storage, Amazon S3 can be slow. And somehow, this always happens at the worst moment: when you need to upload assets and your client is waiting on the other side of the screen. Or glitches occur just when you’re uploading important documents to send over to your manager.

No worries! We will help you solve all your upload issues in Amazon S3. In this post, we’ll review the best practices for S3 transfer acceleration as well as the best tools to speed up your uploads.

A little background

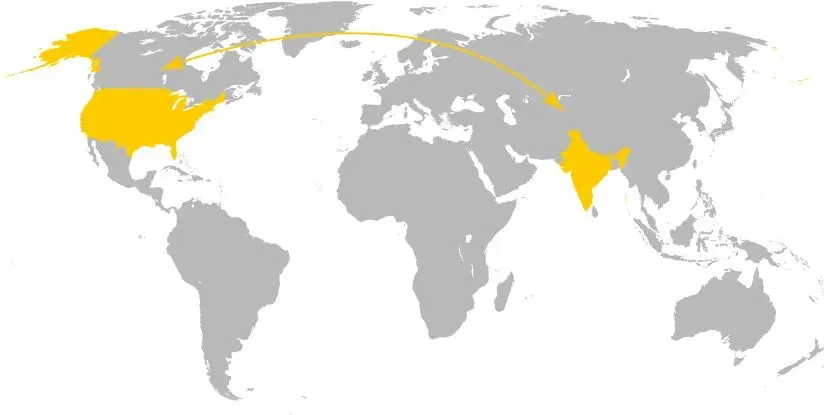

Region-specific buckets (read: root folders) are the defining feature of Amazon S3. AWS lets you choose the location where your assets are stored. By opting for the region closest to you geographically, you can improve the performance of your storage, cut costs, and reduce response time.

Still, regions can also create a bottleneck, especially if your company operates worldwide. Just imagine that your chosen region is in the US, but your users are in India. With a single centralized bucket, they won’t get real-time updates. Instead, they’ll need to wait until the assets finish uploading.

This is the most typical scenario in which you need to take measures to increase transfer speed in your Amazon S3 storage. Another common case is regularly moving batches of data across continents.

Let’s look at how you can improve the situation...

How to optimize your Amazon S3 transfer speed

1) Pay attention to regions and connectivity (pretty obvious, right?)

Since regions affect network latency most directly, it’s better to think about them in advance. Amazon itself recommends using the region closest to you. So take the time to research the best location for your assets when creating a new bucket in Amazon S3.

Low bandwidth is another common cause of S3 data transfer problems. So be ready to check your Internet connection and do some basic troubleshooting. Running a speed test, rebooting the router, and checking your software settings are the least you can do to improve your bandwidth.

Two more factors that matter are instance type and concurrency level. In AWS, an “instance” is a virtual machine running on a remote host. You can compare it to an operating system running on your desktop computer or a local server.

So it’s better to choose your instance type based on your network bandwidth requirements. This can minimize your performance issues.

As for concurrency, this refers to the number of requests made simultaneously. Overall, S3 storage is highly scalable, which means that you can upload lots of data at once, provided you have a big pipe and enough instances.

Still, each S3 operation adds latency. If you’re dealing with too many objects (read: uploading lots of files) and they’re sent one at a time, that per-request latency adds up. This is why performance issues often show up at the concurrency level.
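To make the concurrency idea more concrete, here is a minimal Python sketch that sends several uploads at the same time instead of one by one. Note that `upload_one` is a hypothetical stand-in that only simulates network latency; in a real setup you would replace it with an actual S3 upload call. The object keys and the worker count of 8 are also just assumptions for illustration.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
import time


def upload_one(key: str) -> str:
    """Hypothetical stand-in for a real S3 upload call."""
    time.sleep(0.01)  # simulate the latency of a single request
    return key


def upload_all(keys, max_workers=8):
    """Run uploads concurrently and return the keys that completed."""
    done = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(upload_one, key) for key in keys]
        for future in as_completed(futures):
            done.append(future.result())
    return done


# Illustrative object keys, not taken from any real bucket
keys = [f"assets/photo-{i:03d}.jpg" for i in range(40)]
uploaded = upload_all(keys, max_workers=8)
print(f"{len(uploaded)} of {len(keys)} uploads finished")
```

With 8 workers, the 40 simulated requests overlap instead of queuing, which is the effect you are after when per-request latency dominates.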

2) Consider your data lifecycles

The next piece of advice is to pay more attention to data organization. Your starting point here is data lifecycles. Most of your datasets will expire over time, and you’re unlikely to want to store archives or raw files forever. They’ll occupy space in your S3 storage and affect its productivity.

At the same time, it’s impossible to track the expiration date of every single asset manually. Even if you could, your teammates, who manage the storage together with you, could put a spoke in your wheel.
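If you’d rather not track end dates by hand, S3 itself supports lifecycle rules on a bucket. Below is a minimal Python sketch of what such a rule could look like. The prefix `raw-files/`, the 30-day and 365-day periods, and the helper name `build_lifecycle_rule` are all assumptions made for illustration. The dictionary follows the shape that boto3’s `put_bucket_lifecycle_configuration` accepts, but the sketch only builds and prints it, so nothing is sent to AWS.

```python
import json


def build_lifecycle_rule(prefix: str, archive_after_days: int, expire_after_days: int) -> dict:
    """Build one lifecycle rule: archive objects first, delete them later."""
    if expire_after_days <= archive_after_days:
        raise ValueError("expiration must come after the archive transition")
    return {
        "ID": f"expire-{prefix.strip('/').replace('/', '-')}",
        "Filter": {"Prefix": prefix},  # applies only to keys under this prefix
        "Status": "Enabled",
        "Transitions": [{"Days": archive_after_days, "StorageClass": "GLACIER"}],
        "Expiration": {"Days": expire_after_days},
    }


# Assumed policy: archive raw files after 30 days, delete them after a year
config = {"Rules": [build_lifecycle_rule("raw-files/", 30, 365)]}
print(json.dumps(config, indent=2))
```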

How about adopting a tech solution to solve your file organization issues? It seems like a perfect task for Pics.io Digital Asset Management. Working on top of your S3, the tool will help you categorize your storage by data lifecycle. You’ll find your assets quicker and easier and know all their end dates.

(Google Drive is another storage option for you. Plus, not so long ago, Pics.io also released its own storage & became known as an all-in-one DAM solution.)

3) Split the transfer into multiple uploads / downloads

Still, the measures above are mostly preventive. Now let’s think about what to do if you need to upload large amounts of data here and now, but your transfer speed in Amazon S3 is low.

A good idea is to run your uploads / downloads in parallel. To do so, break your file into chunks and then upload these chunks simultaneously using the AWS Command Line Interface. This method is especially helpful for reducing response time if you need to copy or move assets from one bucket to another.
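To show what “breaking a file into chunks” means in practice, here is a small Python sketch that plans the byte ranges for a multipart upload. It respects two S3 multipart limits: parts must be at least 5 MiB (except the last one), and an upload can have at most 10,000 parts. The function name `plan_parts` and the 8 MiB default part size are assumptions for illustration; the sketch only computes the ranges and does not upload anything.

```python
import math

MIN_PART_SIZE = 5 * 1024 * 1024  # S3 minimum part size (the last part may be smaller)
MAX_PARTS = 10_000               # S3 maximum number of parts per upload


def plan_parts(file_size: int, part_size: int = 8 * 1024 * 1024):
    """Return (part_number, start_offset, length) tuples covering the file."""
    if file_size <= 0:
        raise ValueError("file_size must be positive")
    part_size = max(part_size, MIN_PART_SIZE)
    # Grow the part size if the file would otherwise need too many parts
    part_size = max(part_size, math.ceil(file_size / MAX_PARTS))
    parts = []
    offset = 0
    number = 1
    while offset < file_size:
        length = min(part_size, file_size - offset)
        parts.append((number, offset, length))
        offset += length
        number += 1
    return parts


parts = plan_parts(20 * 1024 * 1024)  # a 20 MiB file
for number, offset, length in parts:
    print(f"part {number}: bytes {offset}..{offset + length - 1}")
```

Each planned range can then be handed to its own worker, so the chunks travel simultaneously rather than in sequence.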

An alternative way to split your data is to use the “include” and “exclude” parameters. Again using the AWS Command Line Interface, this time you split the operations by file name.

However, this method requires at least a basic knowledge of programming. For that reason, despite being effective, this transfer acceleration approach doesn’t suit an average user.
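For a feel of how splitting by file name works, here is a Python sketch that imitates include/exclude filtering with shell-style patterns. It is only an approximation of the idea (filters are applied in order and the last matching one wins), not a reimplementation of the AWS CLI. The file names, the `a-*` pattern, and the helper `selected` are made up for the example.

```python
from fnmatch import fnmatch


def selected(name: str, filters) -> bool:
    """Apply ("exclude" | "include", pattern) filters in order; the last match wins."""
    keep = True  # everything is included by default
    for action, pattern in filters:
        if fnmatch(name, pattern):
            keep = action == "include"
    return keep


# Hypothetical file names
names = ["a-report.pdf", "b-video.mp4", "a-logo.png", "c-notes.txt", "b-scan.pdf"]

# Two batches that separate processes could transfer at the same time
batch_a = [n for n in names if selected(n, [("exclude", "*"), ("include", "a-*")])]
batch_rest = [n for n in names if selected(n, [("exclude", "a-*")])]

print(batch_a)
print(batch_rest)
```

The two batches don’t overlap and together cover every file, so each one can run as its own transfer without copying anything twice.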

4) Zip your files

Zipping your files may look more trivial, but it’s also more doable. Think about compressing your files to improve the transfer speed and save bandwidth.

This strategy is especially useful if you need to move a single large file, like a 50 GB video, that you cannot split into smaller parts. EzyZip or Extract.me are good online archivers you can use. Btw, when choosing the format for your archives, bear in mind the tools that will read them.
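If you want to check how much compression would actually save before uploading, a quick Python sketch like the one below can help. It compresses two in-memory samples with gzip and compares sizes. Both samples are synthetic assumptions: repeated CSV-like text stands in for logs or documents, and random bytes stand in for data that is already compressed.

```python
import gzip
import os


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower is better)."""
    return len(gzip.compress(data)) / len(data)


# Synthetic samples, used only for illustration
text_like = b"timestamp,user,action\n" * 50_000
random_like = os.urandom(100_000)

text_ratio = compression_ratio(text_like)
random_ratio = compression_ratio(random_like)

print(f"text-like data shrinks to {text_ratio:.1%} of its size")
print(f"random data ends up at {random_ratio:.1%} of its size")
```

Repetitive data shrinks dramatically, while random-looking data barely changes. Formats that are already compressed tend to behave like the second sample, so it’s worth testing on a piece of your own file first.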

5) Choose the right tool

Still, zipping is more of a temporary fix. It won’t work as a lasting business solution if, for example, you work with international clients and regularly transfer large files to them.

In this case, you’d better adopt a professional acceleration tool that will optimize the speed of your AWS storage once and for all. Fortunately, there’s a wide range of acceleration software to choose from.

List of S3 transfer managers

Amazon S3 Transfer Acceleration

Despite being new, Amazon S3 Transfer Acceleration has already gained recognition among S3 users. It should be your top choice if you’re looking for a good tool to improve your S3 performance.

According to the statistics, the tool boosts your upload speed by 50 to 500% (!). To achieve this, S3 Transfer Acceleration minimizes the variability in your Internet routing and deals with congestion and glitches. In this way, it tries to cut the distance to S3 for remote apps and set up consistently fast data transfer, regardless of your location.
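Once acceleration is enabled on a bucket, uploads go through a dedicated accelerate endpoint instead of the regular regional one. The Python sketch below simply builds that hostname. The bucket name `my-example-bucket` and the helper `accelerate_endpoint` are hypothetical; the check for dots reflects the rule that bucket names used with Transfer Acceleration can’t contain periods.

```python
def accelerate_endpoint(bucket: str) -> str:
    """Return the Transfer Acceleration endpoint URL for a bucket."""
    if not bucket:
        raise ValueError("bucket name must not be empty")
    if "." in bucket:
        # Bucket names with periods can't be used with Transfer Acceleration
        raise ValueError("bucket name must not contain dots")
    return f"https://{bucket}.s3-accelerate.amazonaws.com"


# Hypothetical bucket name
endpoint = accelerate_endpoint("my-example-bucket")
print(endpoint)
```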

Moreover, the tool is risk-free. You aren’t charged for anything beyond the per-GB upload fee. And if the accelerator doesn’t improve your transfer speed, you won’t have to pay for its use.

Pricing: $0.04 per gigabyte uploaded.
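To get a rough idea of what this means for your budget, here is a tiny Python sketch that multiplies your upload volume by the per-GB rate quoted above. The 250 GB monthly volume is an assumed example, and the rate is the figure from this article, so check the current AWS price list before relying on the result.

```python
ACCELERATION_RATE_USD_PER_GB = 0.04  # rate quoted in this article


def acceleration_cost(gigabytes: float, rate: float = ACCELERATION_RATE_USD_PER_GB) -> float:
    """Estimate the acceleration surcharge for a given upload volume."""
    if gigabytes < 0:
        raise ValueError("gigabytes must not be negative")
    return round(gigabytes * rate, 2)


# Assumed example: 250 GB of accelerated uploads per month
monthly_cost = acceleration_cost(250)
print(f"Estimated surcharge: ${monthly_cost:.2f}")
```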

Pics.io Data Migration Tool

If you don’t want (or don’t have time) to mess around with connecting a tool and configuring the upload, you can delegate this task to professionals. The Pics.io migration service suits you best in this case.

With the help of the Pics.io migration tool, you can move data back and forth between Amazon S3 and any other storage. The number, size, and format of your files don’t matter.

Unlike other tools, Pics.io data migration allows you to transfer metadata like keywords, folder structure, and file descriptions along with your files. This is why it’s the best choice if you’re planning to migrate your whole library to S3 for the first time.

Pricing: On-demand, depending on the size of your media library and migration complexity.

Amazon CloudFront

This is one more solution to migrate your content to S3 in the fastest and most secure way possible. Amazon CloudFront positions itself as a content delivery network (CDN).

Its main advantages are good performance and advanced security measures, such as field-level encryption and HTTPS support. So choose this solution if you want to upload very sensitive data like your clients’ private info.

Also, keep in mind that Amazon CloudFront requires a particular skill set for deployment. Its UI isn’t really intuitive, and the solution isn’t designed for non-technical users.

Pricing: The cost depends on your region and the amount of data transferred. The price can vary from $0.085 per GB for the US up to $0.170 per GB for India.

AWS Snowball

Suitable for large-scale data uploads, AWS Snowball is an offline data migration service. Here, you order the service online, AWS sends you an appliance, you copy your data onto it, and you ship it back to the company. Then, Amazon uploads your data for you.

As you see, the tool requires a lot of handling of your data, so the process may be time-consuming. AWS itself estimates that the transfer takes around a week. This is why we’d recommend resorting to this solution only if your upload exceeds 10 TB.
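To see where a threshold like 10 TB comes from, you can estimate how long the same upload would take over your own connection and compare it with the one-week turnaround. The Python sketch below does this arithmetic. The 80% link utilization is an assumption (real connections rarely sustain their full rated speed), and the bandwidth figures are examples only.

```python
def transfer_days(terabytes: float, mbps: float, utilization: float = 0.8) -> float:
    """Estimate the days needed to push data over a network link.

    terabytes   -- data volume in decimal terabytes (10**12 bytes)
    mbps        -- rated link speed in megabits per second
    utilization -- assumed share of the link you can actually use
    """
    if mbps <= 0 or not 0 < utilization <= 1:
        raise ValueError("mbps must be positive and utilization in (0, 1]")
    bits = terabytes * 1e12 * 8
    seconds = bits / (mbps * 1e6 * utilization)
    return seconds / 86_400


days_100 = transfer_days(10, 100)    # 10 TB over a 100 Mbps line
days_1000 = transfer_days(10, 1000)  # 10 TB over a 1 Gbps line
print(f"100 Mbps: {days_100:.1f} days, 1 Gbps: {days_1000:.1f} days")
```

Under these assumptions, 10 TB takes about 11.6 days over a 100 Mbps line, which is longer than shipping an appliance, but only a little over a day on a 1 Gbps line. So the right cut-off depends heavily on your bandwidth.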

Pricing: The cost starts from $300 for 10 days of use.

Rackspace

If Snowball is not an option for you for some reason, think about using Rackspace Technology. To a large extent, it works in much the same way as Snowball but is more affordable. Rackspace is also an official partner of Amazon, so you don’t have to worry about security.

The flip side here is lower performance than with official Amazon services. The tool wasn’t specifically designed for speeding up data transfers, so some users complain that it works less than ideally.

Pricing: On-demand because data migration isn’t its main focus.

Mover.io

This is one more tool that will help you move your data from one storage to another, Amazon S3 included. Mover.io offers both on-demand and scheduled migration. So feel free to choose the latter when you need more time to finish pre-migration steps.

Working in collaboration with Microsoft, Mover.io offers one more handy feature for your file uploads to S3: the tool lets you check detailed logs of your activity, plus the status of your migration.

At the same time, Mover.io is probably one of the most expensive third-party solutions in this niche. And its price grows progressively with the number or size of your assets.

Pricing: 1 TB of data will cost you around $1000.

CloudFastPath

CloudFastPath is another third-party solution on our list to increase transfer speed in your Amazon S3 storage. The tool works on a completely different principle from all the other solutions: CloudFastPath uses automated data streams to boost your upload speed.

With its good performance, the tool will help you upload large numbers of files as well as large-scale datasets. Its only weakness is a somewhat clunky and simplistic interface.

Pricing: The tool will cost you from $200.00 per month.

S3DistCp with Amazon EMR

This is one more upload tool provided by Amazon that your team of developers will like. S3DistCp is an open-source migration tool that is helpful for copying objects in parallel. To put it differently, the tool allows you to copy many files simultaneously without overburdening your database.

At the same time, S3DistCp is only suitable for file transfers between S3 buckets, or at least inside Amazon. So it won’t help you migrate your data from or to another cloud storage. Besides, a tech background is a must for this kind of data transfer, which is another drawback.

Pricing: Usually included in your S3 costs.

Wrap up

No doubt, Amazon S3 is among the most secure and top-performing cloud storage solutions available on the market. Still, even this storage runs with delays from time to time.

In this post, we covered the best speed acceleration tips to help you upload to S3 as smoothly and quickly as possible. We also advised you on the top software to optimize the speed of your storage. Now it’s your turn to put our tips into practice and get back to us with the most efficient ones.

And if you’re looking for a good data manager for your files stored in Amazon S3, you should pay attention to our Pics.io DAM. The tool will impress you with good performance, efficient file organization and distribution, and an intuitive interface. And it’s just for starters!